On today’s post we will be creating our Serverless REST API with Azure Functions. We will be doing that with Visual Studio.

This post is part of a series where the first part should be read beforehand.

Creating the “basic MM Service”

For this we have to install the latest Azure Functions Tools and an operative Azure Subscription. We can do this from Visual Studio, from either “Tools” and then “Extensions and Updates”, we should either install or update the latest “Azure Functions and Web Jobs Tools” (if we are using VS 2017) or if we are in VS2019, we should go to Tools, then “Get Tools and Features” and install the “Azure development” workload.

We should create a new “Azure Functions” template based Project.

And select the following options:

- Azure Functions v2 (.NET Core)

- Http trigger

- Storage Account: Storage Emulator

- Authorization level: Anonymous

Then we can click create and just run it to see it working. Pressing F5 should start the Azure Functions Core Tools which enables us to test our functions locally, a convenient feature I’d say. Once starting we should see a console window with the cool Azure Functions logo flashing.

then once the startup has finished copy the function URL, which in our case is “http://localhost:7071/api/Function1”.

To try this from a client perspective we can use a browser and type “http://localhost:7071/api/Function1?name= we are going to build something better than this…” but I’d rather use a serious tool like Postman and issue a GET request with a query parameter with key “name” and the Value “we are going to build something better than this…”. This should work right away and also some tracing should appear on the Azure Functions Core Tools. The outcome in Postman should look like:

Or if you opted for the browser test “Hello, we are going to build something better than this…” – which we are going to do in short.

We just saw a “Mickey Mouse” implementation but if you are like me, that won’t satisfy you unless you add some layers in to decouple responsibility and some interfaces because, er… we like complication right? 😉 – Not really but I thought it would be good to put in code which does something simple but is still as SOLID as I can get without too much effort.

What are we going to build?

We are going to create a REST API that exposes a simple list of categories (a complex POCO with Id and Name), then the API Controller which will be the main API interface and it will delegate obtaining the Data to a Service and the Service to a Repository.

And we will stop here, so this will be completely on memory so no Database will be hurt in the process, also no Unit Of Work will Need to be implemented as well.

We will create some interfaces and configure/register them with Dependency Injection, which uses the ASP.NET Core DI basically.

I have a disclaimer which is that WordPress has somehow disabled HTLM editing so I can’t put code from GIT or have a nice “code view” in place which is… making me think of switching to another blog provider… so if you have any suggestion, I’d appreciate you mention it to me with a comment or directly.

Creating the “boilerplate”

We will start by creating the POCO (Plain Old CLR Object) class, Category. For this we can create a “Domain” Folder and inside it create a “Models” Folder where we will place this class:

public class Category

{

public int Id { get; set; }

public string Name { get; set; }

}

Next we will implement the Repository Pattern which is used normally to abstract us from the data management for a concrete Entity. Usually we encapsulate on this classes all the logic to handle data like Methods to list all the data, create, edit, delete or recover a concrete data element. These operations are usually named CRUD, for Create/Read/Update/Delete and are meant to issolate data acess operations from the rest of the application.

Internally These Methods should talk to the data storage but as we mentioned, we will create an in memory list and that will be it.

Inside the “Domain” Folder we will create a “Repositories” Folder, on it we will add a “ICategoryRepository” interface as:

public interface ICategoryRepository

{

Task<IEnumerable<Category>> ListAsync();

Task AddCategory(Category c);

Task<Category> GetCategoryById(int id);

Task RemoveCategory(int id);

Task UpdateCategory(Category c);

}

And a class CategoryRepository which implements this interface..

public class CategoryRepository : ICategoryRepository

{

private static List<Category> _categories;

public static List<Category> Categories

{

get

{

if (_categories is null)

{

_categories = InitializeCategories();

}

return _categories;

}

set { _categories = value; }

}

public async Task<IEnumerable<Category>> ListAsync()

{

return Categories;

}

public async Task AddCategory(Category c)

{

Categories.Add(c);

}

public async Task<Category> GetCategoryById(int id)

{

var cat = Categories.FirstOrDefault(t => t.Id == Convert.ToInt32(id));

return cat;

}

public async Task UpdateCategory(Category c)

{

await this.RemoveCategory(c.Id);

Categories.Add(c);

}

public async Task RemoveCategory(int id)

{

var cat = _categories.FirstOrDefault(t => t.Id == Convert.ToInt32(id));

Categories.Remove(cat);

}

private static List<Category> InitializeCategories()

{

var _categories = new List<Category>();

Random r = new Random();

for (int i = 0; i < 25; i++)

{

Category s = new Category()

{

Id = i,

Name = "Category_name_" + i.ToString()

};

_categories.Add(s);

}

return _categories;

}

}

This Repository will be talked to only by the Service, whose responsibility is to ensure the data related Tasks are performed agnostically from where the data is stored. As we mentioned, the Service could Access more than one repository if the data was more complex. But, as of now it is a mere passthrough or man-in-the-middle between the API Controller and the Repository. Just trust me on this… (or search for “service and repository layer”)

a good read on this hereThere we have all wired by an in-memory list of categories List<Category> named oportunely Categories, which is populated by by a function named InitializeCategories().

So, now we can go and create our “Services” Folder inside the “Domain” Folder…

We should add a ICategoryService interface:

public interface ICategoryService

{

Task<IEnumerable<Category>> ListAsync();

Task AddCategory(Category c);

Task<Category> GetCategoryById(int id);

Task RemoveCategory(int id);

Task UpdateCategory(Category c);

}

Which matches perfectly the functionality of the Repository, for the reasons stated, but the objectives and responsabilities are different.

We will add a CategoryService class which implements the ICategoryInterface:

public class CategoryService : ICategoryService

{

private readonly ICategoryRepository _categoryRepository;

public CategoryService(ICategoryRepository categoryRepository)

{

_categoryRepository = categoryRepository;

}

public async Task<IEnumerable<Category>> ListAsync()

{

return await _categoryRepository.ListAsync();

}

public async Task AddCategory(Category c)

{

await _categoryRepository.AddCategory(c);

}

public async Task<Category> GetCategoryById(int id)

{

return await _categoryRepository.GetCategoryById(id);

}

public async Task UpdateCategory(Category c)

{

await _categoryRepository.UpdateCategory(c);

}

public async Task RemoveCategory(int id)

{

await _categoryRepository.RemoveCategory(id);

}

}

With this we should be able to implement the Controller without any Showstopper..

Adding the necessary services wiring up

This is where everything comes together. There are some changes though, due to the fact that we will be adding dependency injection to setup the services. Basically we are following the steps from the official Microsoft how-to guide which in short are:

1. Add the following NuGet packages:

- Microsoft.Azure.Functions.Extensions

- Microsoft.NET.Sdk.Functions version 1.0.28 or later (at the moment of writing this, it was 1.0.29).

2. Register the services.

For this we have to add a Startup method to configure and add components to an IFUnctionsHostBuilder instance. For this to work we have to add a FunctionsStartup assembly attributethat specifies the type name used during startup.

On this class we should override the Configure method which has the IFUnctionsHostBuilder as a parameter and use this to configure the services.

For this we will create a c# class named Startup and put the following code:

[assembly: FunctionsStartup(typeof(ZuhlkeServerlessApp01.Startup))]

namespace ZuhlkeServerlessApp

{

public class Startup : FunctionsStartup

{

public override void Configure(IFunctionsHostBuilder builder)

{

builder.Services.AddSingleton<ICategoryService, CategoryService>();

builder.Services.AddSingleton<ICategoryRepository, CategoryRepository>();

}

}

}

Now we can use constructor injection to make our dependencies available to another class. For example the REST API which is implemented as an HTTP Trigger Azure Function will resolve the ICategoryServer on its Constructor.

For this to happen we will have to change how this HTTP Trigger is originally built (hint: It’s Static…) so we need it to call its constructor..

We will remove the Static part on the generated HTTP Trigger function and the class that contains it or either generate a complete new file.

We will either edit it or add a new one with the name “CategoriesController”. It should look like follows:

public class CategoriesController

{

private readonly ICategoryService _categoryService;

public CategoriesController(ICategoryService categoryService) //, IHttpClientFactory httpClientFactory

{

_categoryService = categoryService;

}

..

The dependencies will be resolved and registered at the application startup and resolved through the constructor each time a concrete Azure Function is called as its containing class will now we invoked due it is no longer static.

So, we cannot use Static functions if we want the benefits of dependency injection, IMHO a fair trade off.

In the code I am using DI on two places, one on the CategoriesController and another on an earlier class already shown, the CategoriesController. Did you realize the constructor injection?

Creating the REST API

As stated on the earlier section, we removed the static from the class and the functions.

Now we are ready to finish implementing the final part of the REST API… Here we will implement the following:

- Get all the Categories (GET)

- Create a new Category (POST)

- Get a Category by ID (GET)

- Delete a Category (DELETE)

- Update a Category (PUT)

Get all the Categories

Starting with getting all the categories, we will create the following function:

[FunctionName("MMAPI_GetCategories")]

public async Task<IActionResult> GetCategories(

[HttpTrigger(AuthorizationLevel.Anonymous, "get", Route = "categories")]

HttpRequest req, ILogger log)

{

log.LogInformation("Getting categories from the service");

var categories = await _categoryService.ListAsync();

return new OkObjectResult(categories);

}

Here we are associating “MMAPI_GetCategories” as the function Name, adding “MMAPI_” to relate them together in our Azure UI, as everything we deploy as a package will be put togher and using a naming convention will be convenient for ordering and grouping.

The Route for our HttpTrigger is “categories” and we associate this function to the HTTP GET verb without any Parameters.

Note here we are using async as the Service might take some time and not be inmediate.

Once we get the list of categories from our service, we return it inside the OkObjectResult

Create a new Category

For creating a new Category we will create the following function:

[FunctionName("MMAPI_CreateCategory")]

public async Task<IActionResult> CreateCategory(

[HttpTrigger(AuthorizationLevel.Anonymous, "post", Route = "categories")] HttpRequest req,

ILogger log)

{

log.LogInformation("Creating a new Category");

string requestBody = await new StreamReader(req.Body).ReadToEndAsync();

dynamic data = JsonConvert.DeserializeObject<Category>(requestBody);

var TempCategory = (Category)data;

await _categoryService.AddCategory(TempCategory);

return new OkObjectResult(TempCategory);

}

This will use the POST HTTP verb, has the same route and will expect the body of the request to contain the Category as JSON.

Our code will get the body and deserialize it – I am using the Newtonsoft Json library for this and I delegate to our Service interface the Task to add this new Category.

Then we return the object to the caller with an OkObjectResult.

Get a Category by ID

The code is as follows, using a GET HTTP verb and a custom route that will include the ID:

[FunctionName("MMAPI_GetCategoryById")]

public async Task<IActionResult> GetCategoryById(

[HttpTrigger(AuthorizationLevel.Anonymous, "get", Route = "categories/{id}")]HttpRequest req,

ILogger log, string id)

{

var categ = await _categoryService.GetCategoryById(Convert.ToInt32(id));

if (categ == null)

{

return new NotFoundResult();

}

return new OkObjectResult(categ);

}

To get a concrete Category by an ID, we have to adjust our route to include the ID, which we retrieve and hand over to the categoryservice class to recover it.

Then if found we return our already well known OkObjectResult with it or, if the Service is not able to find it, we will return a NotFoundResult.

Delete a Category

The Delete is similar to the Get by ID but using the DELETE HTTP verb and obviously a different logic. The code is as follows:

[FunctionName("MMAPI_DeleteCategory")]

public async Task<IActionResult> DeleteCategory(

[HttpTrigger(AuthorizationLevel.Anonymous, "delete", Route = "categories/{id}")]HttpRequest req,

ILogger log, string id)

{

var OldCategory = _categoryService.GetCategoryById(Convert.ToInt32(id));

if (OldCategory == null)

{

return new NotFoundResult();

}

await _categoryService.RemoveCategory(Convert.ToInt32(id));

return new OkResult();

}

The route is the same as the previous HTTP Trigger , we also do a Retrieval of the Category by Id. Then the change happens if we find it, then we ask the Service to remove the category.

Once this is complete we return the OkResult. Without any category as we have just deleted it.

Update a Category (PUT)

The Update process is similar as well to the delete with some changes, Code is as follows:

[FunctionName("MMAPI_UpdateCategory")]

public async Task<IActionResult> UpdateCategory(

[HttpTrigger(AuthorizationLevel.Anonymous, "put", Route = "categories/{id}")]HttpRequest req,

ILogger log, string id)

{

var OldCategory = await _categoryService.GetCategoryById(Convert.ToInt32(id));

if (OldCategory == null)

{

return new NotFoundResult();

}

string requestBody = await new StreamReader(req.Body).ReadToEndAsync();

var updatedCategory = JsonConvert.DeserializeObject<Category>(requestBody);

await _categoryService.UpdateCategory(updatedCategory);

return new OkObjectResult(updatedCategory);

}

Here we use the PUT HTTP Verb, receive an ID that indicates us the category we want to update and in the body, just as with the Creation of a new category, we have the Category in JSON.

We check that the Category exists and then we deserialize the body into a Category and delegate to the service Updating it.

Finally, we return the OkObjectResult containing the updated category.

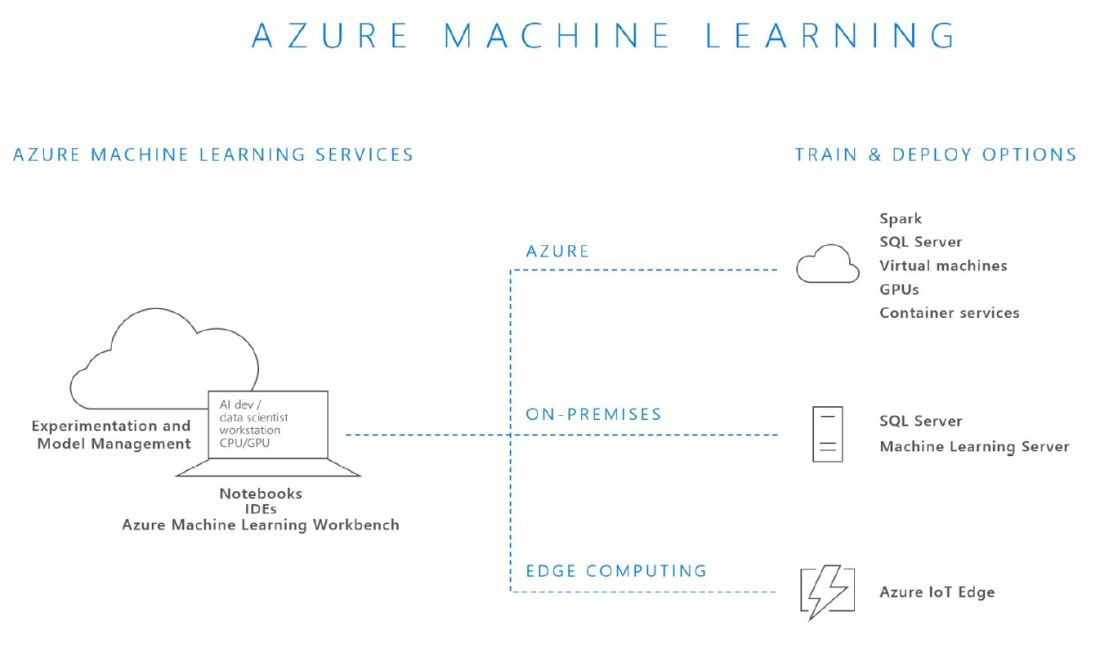

Deploying the REST API

We could now test it in local as we did at the beginning but what’s the fun of it, right?

So, in order to deploy it to the cloud we will Need to have a Microsoft Account logged in our Visual Studio that has an Azure subscription associated to it.

We will build it in release and then go to our Project, right click and choose “Publish..”

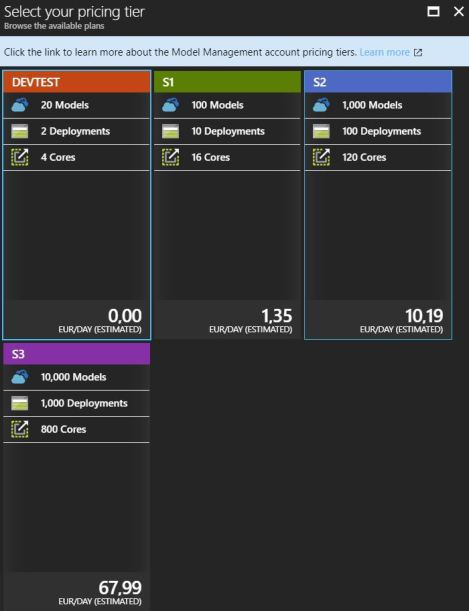

We will choose “Azure Function App” as publish target and “create new”. Also it is recommended to mark the “Run from package file” which is the recommended option.

And click on “create profile”. There we will be presented into the profile configuration, where I recommend we create all the resources and do not reuse.

Regarding the Hosting Plan, be sure to check the “Consumption plan” which has like 1Milion of API Calls before any charge is made, which is convenient for testing purposes I’d say.

Note that when I publish this post this app service will be long gone so no issues in Posting it :).

Once configured we should click on “Create” and wait for all the resources to be created, once this is done the Project will be built and deployed to Azure.

Checking our Azure REST API

Now it is a good moment to move to our favorite web browser and type portal.azure.com.

We can move right away to the resource group we created and into the App Service created. Sometimes, when Publishing the Functions are not populated.

if that happens, don’t panic, just re-publish it again. The Publish view should look like:

A bit after, we should be able to see our services in our App Service:

To try this quickly, we should go to the GetCategories, click on the “Get function URL” and copy it.

Then we can go into our friend PostMan, set a GET HTTP request and paste the URL we just copied in the, er, URL Field. And click Send.

We should see something like:

We could also test this on a web browser and on the Test section on the Azure portal, but I like to test Things as close as customer conditions.

As next steps I throw you the challenge to test this Serverless REST Api we just build in Azure with Azure Function Apps. I’d propose to try to create a new Category such as “999” – “.NET Core 3.0” then retrieve it by ID, then modify it to “.NET 5.0”, retrieve it and end up deleting it and trying to retrieve it again just to get the “NotFoundResult”.

So, what do you think about Azure Functions and what we just have build?

Next..

And that’s it for today, on our next entry we will jump into API Management .

Happy Coding & until the next entry!